Over the past few decades, the institutions that rank colleges and universities have become increasingly important. US News and World Report is the oldest college rankings institution, and generally the best known. It is not without its detractors, however: many critics (including President Obama, in his recent push for a new way to rate colleges) cite its lack of student outcome and educational quality data as a problem. With a decision as important as where to attend school and prepare for your future, it makes sense to consult a variety of opinions. Many students and families, however, find the variety of ranking types and methodologies outside of US News a bit overwhelming. To help, we took a look at the different college rankings and prepared this guide.

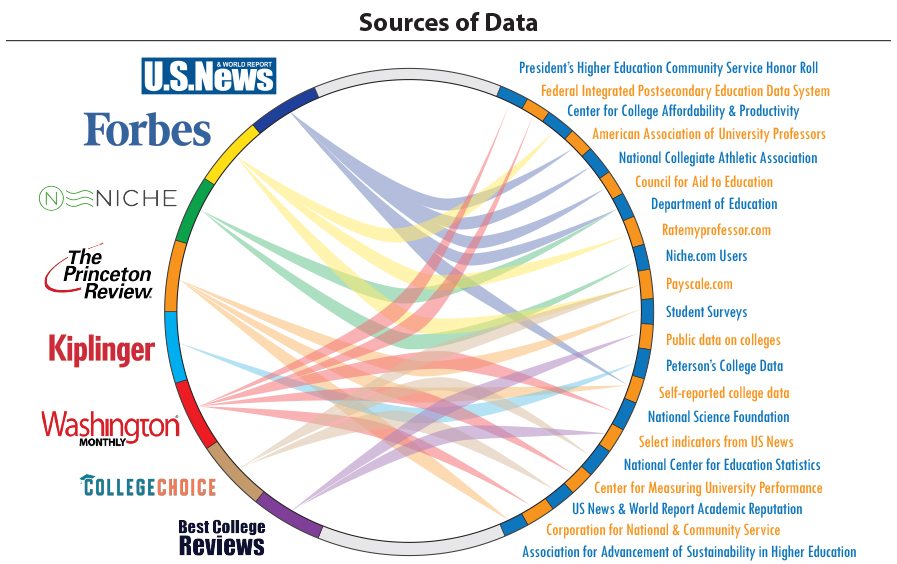

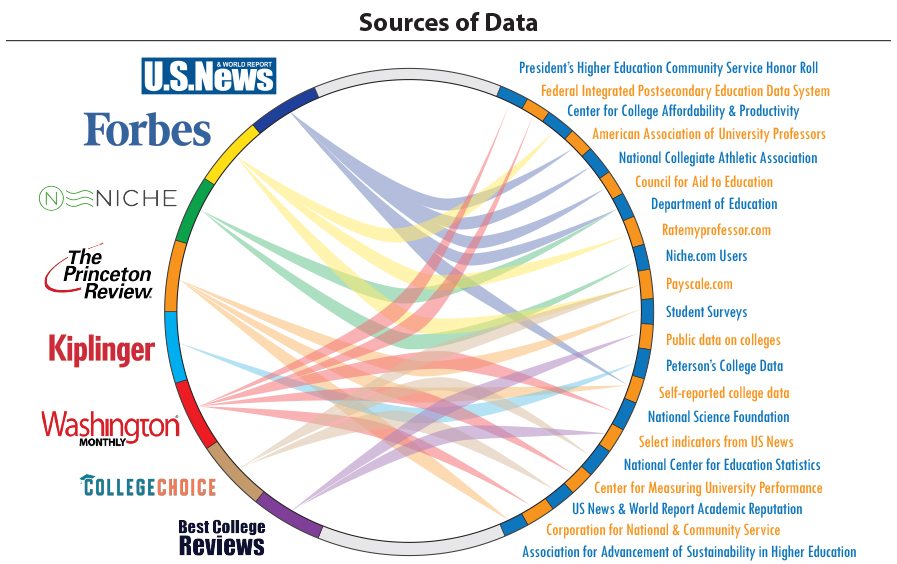

Sources of Data

The variety of data sources is a testament to the wide range of factors valued by college rankings. The most common data sources include Payscale.com, the Department of Education, and the National Center for Education Statistics. Payscale is consulted for alumni salaries, or the starting salaries of recent graduates. The Department of Education database is often consulted for freshman-to-sophomore retention rate, average cost of attendance after financial aid, endowment data, and loan default rates for graduates. The Carnegie Classification system is also used by many of the rankings. This classification divides higher education institutions into 33 specific types, grouped under larger categories that include Associate's Colleges, Doctorate-granting Universities, Master's Colleges and Universities, Baccalaureate Colleges, Special Focus Institutions, and Tribal Colleges. Though college rankings often combine some of the smaller Carnegie classifications, the basic system allows rankings to compare like with like.

Variations in Rankings

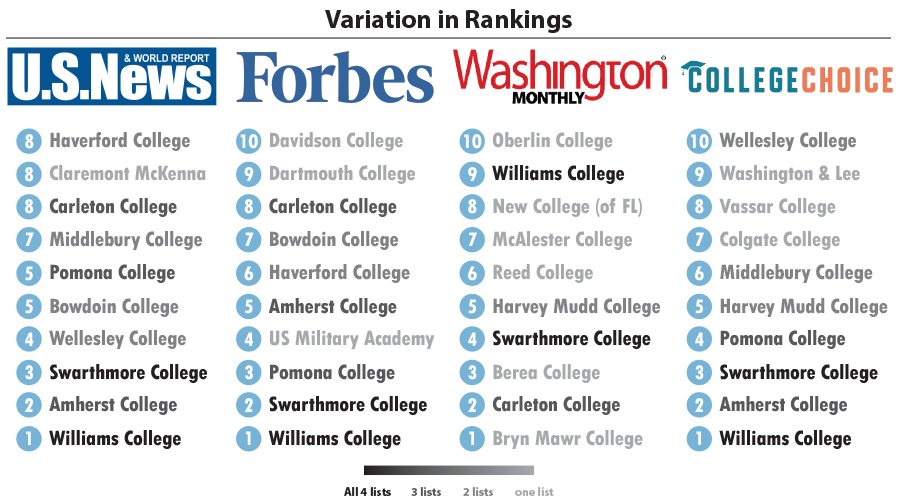

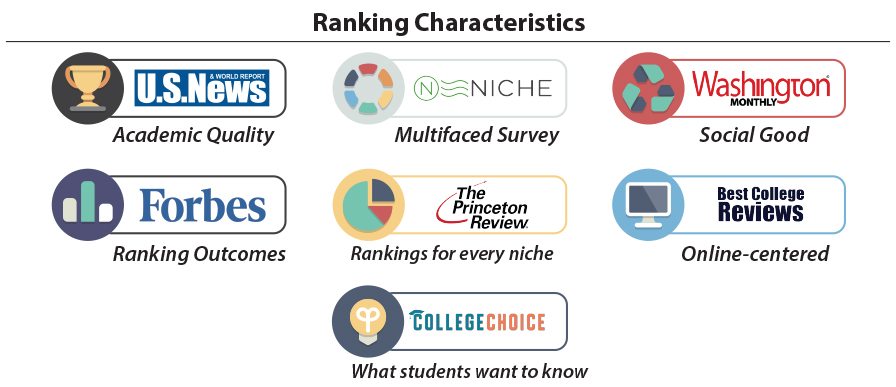

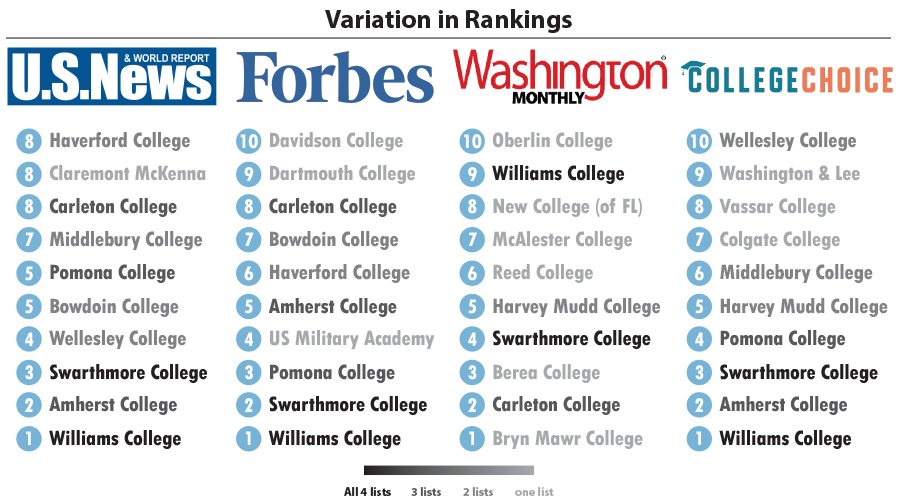

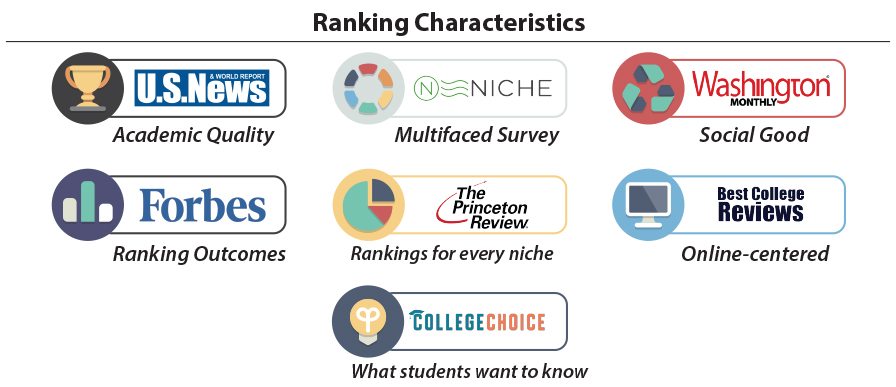

There can be large discrepancies even amongst similar rankings of the same type of schools. This may seem problematic when it translates to more applications and hype for certain schools, and many criticisms of college rankings do center on the way they encourage colleges to "game" the rankings by improving only a few (oftentimes arbitrary) metrics that are heavily weighted. However, looking at the wide range of rankings available online, it's much more often the case that ranking institutions are ranking completely different aspects of schools, not simply providing slightly different ways to rank the same things. The effect is that schools must improve on a wide variety of important measures if they are to rank well according to multiple institutions. A rule of thumb for students and families is that each ranking should be taken with a grain of salt, and viewed in conjunction with others as one possible lens into what a school is like.

The graphic above shows how widely ranking styles and metrics differ: only four ranking institutions even rank the best liberal arts colleges in the nation. In contrast, Kiplinger only ranks liberal arts colleges according to which schools are the "best value." Forbes only ranks America's Top Colleges, grouping national and research institutions together with liberal arts colleges (alongside a number of other rankings such as best schools for entrepreneurs, or Christians, and so on). And the Princeton Review takes yet another stance by simply listing schools and ranking them within 62 smaller categories (examples include "great financial aid," "Town-Gown Relations Strained," or "Lots of Greek Life").

Though many of the colleges are common to most of the top ten rankings above, a bit of context in the form of methodologies goes a long way. Forbes, College Choice, and US News are the most similar, and all rely on at least a few common data sources. Undergraduate academic reputation accounts for 22.5% of the US News ranking; this category is composed of peer opinions from top academics (presidents, provosts, and deans of admissions) as well as the opinions of over 2,500 top college counselors. The same score is also used by College Choice, where it accounts for 25% of each school's rank. Graduation rates account for 7.5% of both the US News and Forbes rankings, singling out many of the same top schools. Forbes also homes in on many of the schools that US News ranks highly through peer surveys, both with its academic success category (which rewards schools whose students win prestigious scholarships and fellowships or go on to earn a Ph.D.) and with student opinions of academic quality drawn from reviews on the site RateMyProfessors.com.
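To make the percentages above concrete, here is a minimal sketch of how a weighted ranking score can be put together. Only the 22.5% and 7.5% weights come from the methodology described above; the `everything_else` bucket, the two schools, and all of their component scores are made-up placeholders, and real ranking formulas involve many more factors than this.

```python
# A sketch of a weighted composite score. Only the 22.5% and 7.5% weights
# come from the article; everything else here is a hypothetical placeholder.

def composite_score(scores, weights):
    """Combine 0-100 component scores into one weighted average."""
    total_weight = sum(weights.values())
    weighted_sum = sum(scores[name] * weight for name, weight in weights.items())
    return weighted_sum / total_weight

weights = {
    "academic_reputation": 22.5,  # share quoted for US News
    "graduation_rate": 7.5,       # share quoted for US News and Forbes
    "everything_else": 70.0,      # placeholder for all remaining factors
}

# Two invented schools that differ only on the two named factors.
school_a = {"academic_reputation": 90, "graduation_rate": 80, "everything_else": 70}
school_b = {"academic_reputation": 70, "graduation_rate": 95, "everything_else": 70}

score_a = composite_score(school_a, weights)
score_b = composite_score(school_b, weights)

print(score_a)  # 75.25
print(score_b)  # 71.875
```

In this invented case School A comes out ahead despite its lower graduation rate, because reputation carries three times the weight. That is the kind of effect a methodology page can reveal and a top ten list cannot.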

Washington Monthly is the outlier above. Though it shares a few schools with all the other lists, it ranks a largely different aspect of the college experience. Contribution to the public good is the principle behind Washington Monthly's methodology, which is built on three categories: social mobility, research, and service. The takeaway here is that if you're looking to attend a top liberal arts school that also contributes to the public good (at least according to Washington Monthly), you can choose a college that appears on both the Washington Monthly list and another, more traditional ranking above. If you're more interested in the social good aspect but don't think a hyper-competitive top school is necessarily for you, a school that's on the Washington Monthly list but not at the top of more traditional rankings might be a good fit. There's no one right way to rank top colleges. Furthermore, a diversity of ranking styles often helps students cross-examine schools and find just the right place.
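For readers who like to keep their shortlist in a spreadsheet or script, the cross-checking described above amounts to comparing two lists. The sketch below assumes hypothetical placeholder names ("College A" and so on) rather than real ranking data, and the helper names `on_both` and `only_on_first` are our own.

```python
# Hypothetical placeholder lists; these are not real ranking results.
washington_monthly = ["College A", "College B", "College C", "College D"]
traditional_ranking = ["College B", "College D", "College E", "College F"]

def on_both(first, second):
    """Schools that appear on both lists, in the order of the first list."""
    second_set = set(second)
    return [school for school in first if school in second_set]

def only_on_first(first, second):
    """Schools on the first list that are missing from the second."""
    second_set = set(second)
    return [school for school in first if school not in second_set]

# Public-good schools that also place well on a traditional ranking.
both = on_both(washington_monthly, traditional_ranking)

# Public-good schools that the traditional ranking leaves out.
public_good_only = only_on_first(washington_monthly, traditional_ranking)

print(both)              # ['College B', 'College D']
print(public_good_only)  # ['College A', 'College C']
```

The first result corresponds to the "top school that also contributes to the public good" case, and the second to the "social good without the hyper-competitive label" case.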

Pros and Cons

As the most influential of rankings, US News rankings are often catered to by colleges seeking to "up" their prestige. While competition in admissions is at least partially due to a growing volume of applicants, practices such as denying overqualified applicants to boost rankings through the selectivity factor have been known to occur. As colleges rise in the rankings due to selectivity, more applications follow. As more applications are received, more can be rejected, leading to a cyclical climb up the rankings that has very little to do with any change in the quality of a school. Another con of US News rankings is that very little outcome data is collected, even though first jobs, starting salaries, and where graduates end up are often very important factors for applicants. That said, the US News rankings are still well respected, and they do boast a wide array of specialized stats as well as polling data that is compiled into the "academic reputation" stat.

While Forbes rankings attempt to fill a need left unmet by some of the other large ranking institutions, they also rely on some of the more dubious data sources. RateMyProfessors.com, a site for professor ratings, accounts for a full 10% of the total rankings, which skews results because the site may not be popular amongst all demographics or at all schools. While Forbes does provide data on outcomes through its "post-graduate success" category, a third of that category is composed of data from Payscale.com, a site where curious individuals compare salary data (and a sometimes inaccurate tool). That said, Forbes does fill the need for rankings that attempt to reward student outcomes and success after school. A number of very strong data sources are also used in compiling Forbes rankings, and they are definitely one of several rankings that should be consulted in a college search.

The Princeton Review's college rankings are unique in that they provide rankings on perhaps the largest range of subjects. Though the institution doesn't compile an overall ranking, the feel for a school is often made clearer by rankings on topics such as "students pack the stadiums" or "most politically active students." For some, the lack of overall rankings is actually a good thing, as one common complaint asks how ranking sites can ever verifiably say that one school should rank a few spots higher than another. Princeton Review rankings are a great place to start for teasing out what makes many colleges unique.

Washington Monthly's rankings get at the heart of what many higher education institutions say they're about. While the three-pronged methodology chosen to rank how colleges aid the "public good" might gloss over details like how comfortable the dorms are, or academic rigor, it does provide an interesting look into some of our most important social institutions. Service, research, and social mobility are all weighted equally. Service includes the size of the Air Force, Army, and Navy ROTC programs, the number of alumni currently in the Peace Corps, the percentage of federal work-study money spent on community service, whether the institution provides scholarships for community service, and other indicators focusing on service as an administrative and academic focal point. Research looks at student outcomes in academia, and awards for alumni and faculty. Social mobility rewards schools that are good at graduating students as well as keeping costs low. While such a methodology certainly misses some common features of campus life and the college experience, it does provide one of the most distinctive ways to rank schools online today.

Our own rankings differ from those of the other listed institutions in that they account for the differences between what makes a quality traditional program and what makes a quality online program. Graduation rate and cost per credit together account for 45% of the total ranking (both are important quality of life and efficacy of education metrics at any institution), and the next most important indicator is quality of faculty. In this measure, we first reward schools for higher percentages of full-time faculty (as opposed to part-time), taking this to mean greater expertise in their discipline. This is only a portion of the quality of faculty component; the rest is accounted for by the average level of experience professors have teaching online courses. While good professors should be a boon to their schools, the skill sets needed to be a great online teacher can take time to develop, a distinction we felt needed to be made for our online school rankings.
